
7/30/2023 Random forest machine learning

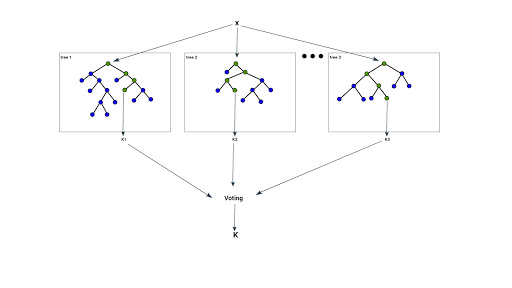

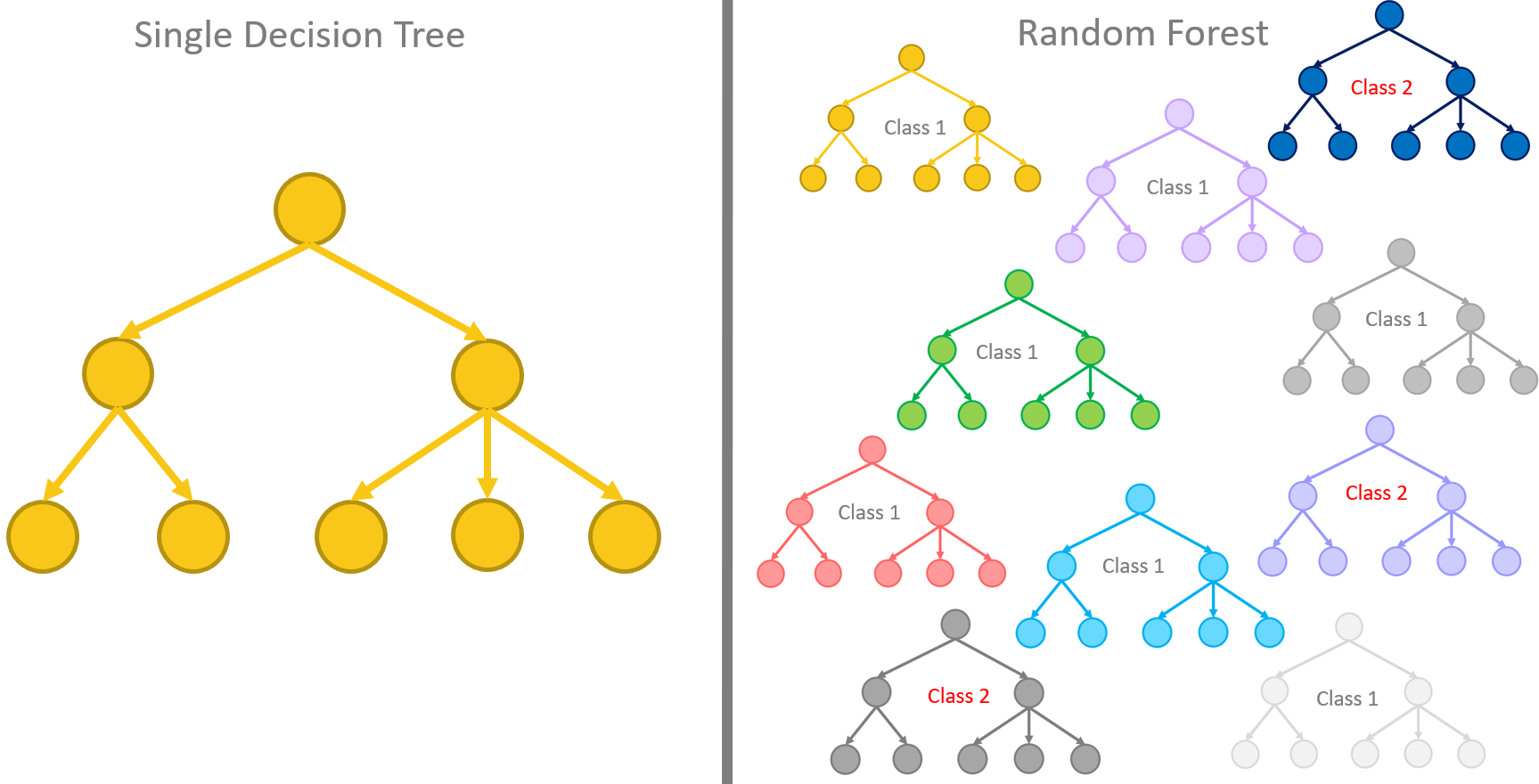

Random forest is an ensemble learning algorithm that constructs many decision trees during training. For classification, it predicts the mode of the classes predicted by the individual trees. Because random forests use the results of multiple learners (decision trees), they are a type of ensemble machine learning algorithm. Ensemble learning methods reduce variance and improve performance over their constituent learning models.

An ML algorithm generates and trains each tree based on the provided dataset. This training consists of choosing the right questions about the right features, placed in the right spot on the tree. The algorithm can work with both categorical and numerical data, and it can produce classification trees as well as regression trees. We'll cover the advantages and disadvantages of random forests in sklearn and more in the following points.
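To make the "mode of the classes" rule concrete, here is a minimal sketch in plain Python. The function name `majority_vote` and the example labels are hypothetical, chosen only for illustration; a real library aggregates its trees internally and may do so differently (for example, by averaging class probabilities).

```python
from collections import Counter


def majority_vote(tree_predictions):
    """Return the most common class label among per-tree predictions."""
    counts = Counter(tree_predictions)
    # most_common(1) returns [(label, count)] for the top label.
    # Ties go to the label seen first, which is an arbitrary choice here.
    label, _ = counts.most_common(1)[0]
    return label


# Hypothetical predictions from five trees for a single sample.
votes = ["cat", "dog", "cat", "cat", "dog"]
print(majority_vote(votes))  # prints "cat" (3 votes against 2)
```

For regression, the same idea applies with the mean of the per-tree predictions in place of the mode.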

Random forest is a supervised machine learning algorithm. It is one of the most used algorithms due to its accuracy, simplicity, and flexibility. Decision trees can be incredibly helpful and intuitive ways to classify data. However, they can also be prone to overfitting, resulting in poor performance on new data. In this tutorial, you'll learn what random forests in Scikit-Learn are and how they can be used to classify data.
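As a rough illustration of classifying data with a random forest in Scikit-Learn, the sketch below trains a single decision tree and a forest on the same split and prints train and test accuracy for each. The Iris dataset, the 70/30 split, `n_estimators=100`, and the fixed `random_state` are all assumptions made for the example, not choices taken from this post.

```python
from sklearn.datasets import load_iris
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

# Iris is used only as a small built-in example dataset (an assumption).
X, y = load_iris(return_X_y=True)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.3, random_state=0, stratify=y
)

# A single decision tree, grown without any depth limit.
tree = DecisionTreeClassifier(random_state=0).fit(X_train, y_train)

# A forest of 100 trees; n_estimators=100 is an illustrative choice.
forest = RandomForestClassifier(n_estimators=100, random_state=0)
forest.fit(X_train, y_train)

# Compare accuracy on data the models have seen and on held-out data.
for name, model in [("tree", tree), ("forest", forest)]:
    train_acc = model.score(X_train, y_train)
    test_acc = model.score(X_test, y_test)
    print(f"{name}: train={train_acc:.3f} test={test_acc:.3f}")
```

A large gap between train and test accuracy is the usual sign of overfitting. On a dataset as small and clean as Iris the two models may score similarly, so treat the printed numbers as a way to inspect the gap rather than as a benchmark.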

